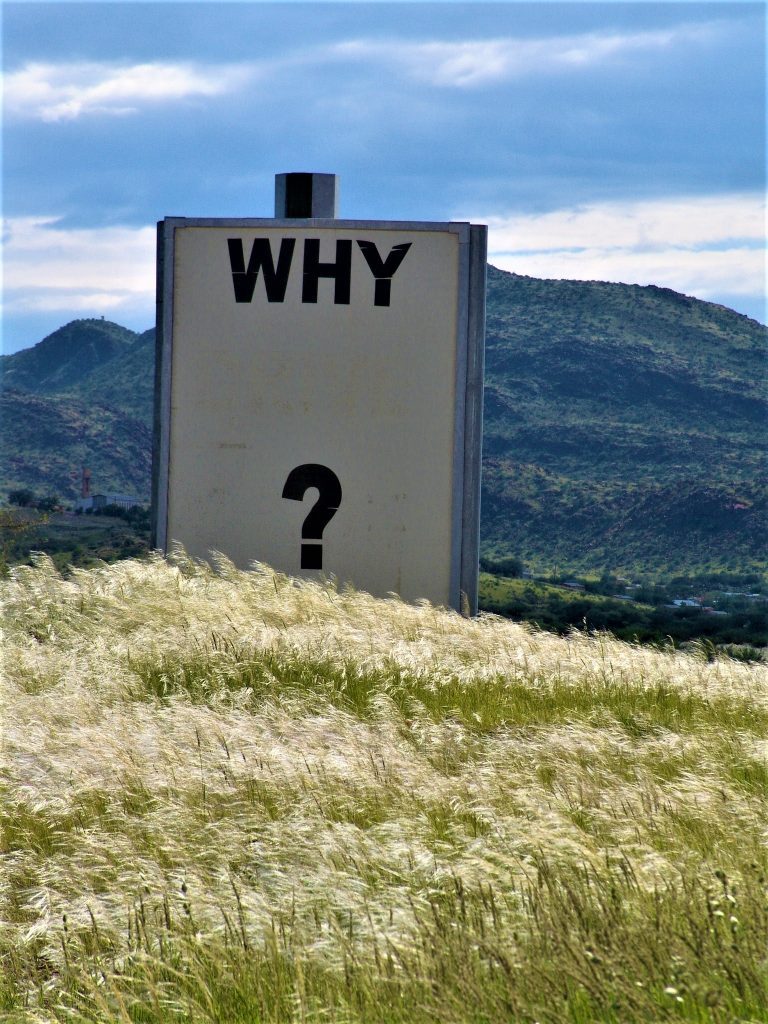

There is growing concern that artificial intelligence (AI) systems will make decisions we do not understand, and a growing call for these systems to report how they make their decisions. If an autonomous vehicle stops for no apparent reason, or has an accident, there is an expectation that a black box will report the data trail and analysis that led to that action. In short, we are asking for an AI rationale that can be used to improve operations or to attribute liability. This effort is doomed to failure, and may lead to greater problems.

Let’s start with human decision making. We do it all the time. Research shows that we make decisions before we are consciously aware of making them. So what do people do when asked why they made a decision? We provide a “rationale” for our choice. Unfortunately, we may not be aware of why we made the choice, and we also have a natural inclination to support our choices. If you have watched a hypnotist perform, you have seen this in action: the hypnotist suggests some irrational action, and after the subject has responded to the trigger for that action, the subject tends to make up a rationale, or at best responds “I don’t know.” The reality is that we can ask humans why they took an action, but they are not reliable reporters of their own process.

So back to our quest for AI rationale. For some “brute force” AI systems, such as Deep Blue, which became a chess champion through a massive look-ahead process, the rationale is fairly straightforward, although some chess-specific psy-ops may have been incorporated into the software. Of course, Deep Blue could not articulate an answer to “why?” With AlphaGo, the initial system that defeated grandmasters, the process was deep learning, not brute force. This initial system was taught with thousands of recorded games, and then millions of self-play games. The 2017 version of this system, AlphaGo Zero, was trained only on self-play, with no prior human games. This version passed all its predecessors after forty days of play, having passed the initial grandmaster-beating AlphaGo in three days. The success of this new system is attributed to the learning models applied (self-play providing a matched sparring partner, integrating Monte Carlo tree search with multiple evaluation methods in a residual neural net), none of which provides any clarity about the selection of moves that results in a consistently winning system.

The Down Side of “Why”

For better or worse, as humans develop systems, including AI systems, they unconsciously incorporate their own biases. Given that human developers may not be able to explain the decisions of such systems, it is unlikely that an AI could provide an accurate rationale. But here we can project our human experience one more step forward. Our conscious awareness of our decisions may just be the story we create to explain what we have done, making storytelling, and not rational decision making, a key aspect of consciousness.

So where does this leave our AI? If we present the weightings for each neural net node to the jury as the description of why the robot acted as it did, it will not fly, accurate though the assertion may be. So we will develop a rationale, a story, a plausible scenario that might explain the action. It is unlikely we will point to our own biases as part of the narrative.

Now move to the next-generation AI, merging Watson, with its ability to respond to questions, with AlphaZero and its ability to dominate the game. We ask “why did you make that move?” Will the AI spew out a table of numbers? Will it discover some deeper truth about its real nature and expose its inner soul? Or will it provide a rationale for its actions? If that rationale is constructed to match the context of the question, we may have just taught the AI to lie.

