Presumably we will reach a tipping point when intelligent devices surpass humans in many key areas, quite possibly without our being able to understand what has just happened. A variation of this is called “the singularity” (a term popularized by Vernor Vinge and heralded by Ray Kurzweil). How would we know we have reached such a point? Does this herald the AI apocalypse? One indicator might be increased awareness of, concern about, and discussion of the social impact of AIs. This activity has grown significantly in the last year, and even in the last few months. Here are some examples for those trying to track the trend (of course Watson, Siri, Google Home, Alexa, Cortana and their colleagues already know this).

- IEEE Consumer Electronics magazine, April 2017

- IEEE Computer Society Edge magazine, April 2017

- National Geographic magazine, April 2017

- IEEE’s initiative for ethical considerations for AI systems

- Yuval Noah Harari’s book Homo Deus and its projections

- Articles in many major newspapers on Big Data, algorithms, AI, and autonomous vehicles

- A short history of AI by CS Past President David Allan Grier is available as well

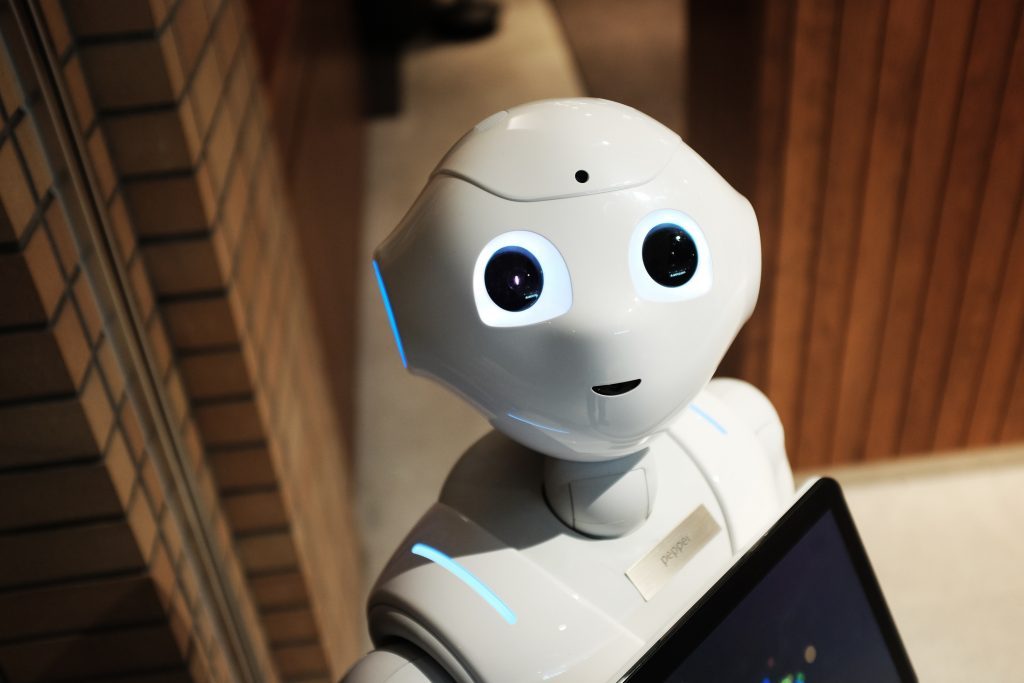

A significant point made by Harari is that Artificial Intelligence does not require Artificial Consciousness. An intermediate stage might include Artificial Emotional interactions. A range of purpose-built AI systems can individually have a significant impact on society without amounting to what the IEEE Ethics project refers to as “Artificial Generalized Intelligence.” This means that jobs, elections, advertising, online/phone service centers, weapons systems, vehicles, book/movie recommendations, news feeds, search results, online dating connections, and so much more will be (or are being) influenced or directed by combinations of big data, personalization, and AI.

What concerns/opportunities do you see in this “brave new world”?

Also see Apocalypse Deterrence.

JOIN SSIT
